The Role of Haptics in Training and Games for Hearing-Impaired Individuals: A Systematic Review

Optical Rules to Mitigate the Parallax-Related Registration Error in See-Through Head-Mounted Displays for the Guidance of Manual Tasks

Applying Cognitive Load Theory to eLearning of Crafts

Applying Cognitive Load Theory to eLearning of Crafts -

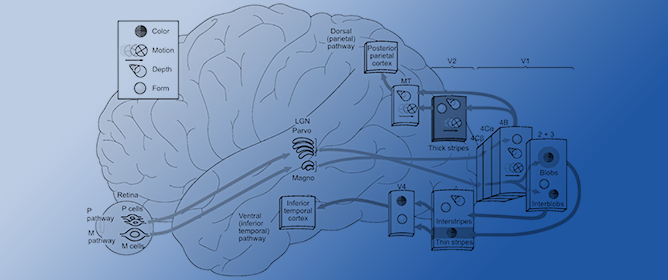

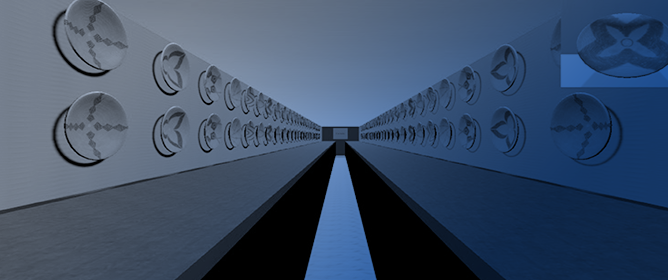

Optimal Stimulus Properties for Steady-State Visually Evoked Potential Brain–Computer Interfaces: A Scoping Review

Virtual Reality Assessment of Attention Deficits in Traumatic Brain Injury: Effectiveness and Ecological Validity

Journal Description

Multimodal Technologies and Interaction is an international, peer-reviewed, open access journal on multimodal technologies and interaction published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Inspec, dblp Computer Science Bibliography, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Science Applications)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 14 days after submission; acceptance to publication is undertaken in 3.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.5 (2022)

Latest Articles

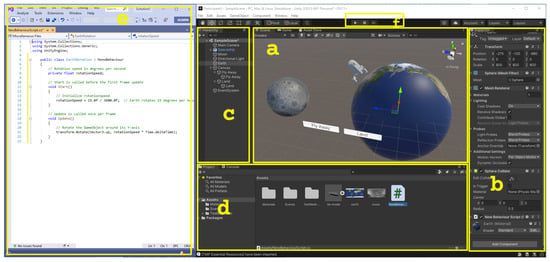

How New Developers Approach Augmented Reality Development Using Simplified Creation Tools: An Observational Study

Multimodal Technol. Interact. 2024, 8(4), 35; https://doi.org/10.3390/mti8040035 - 22 Apr 2024

Abstract

Software developers new to creating Augmented Reality (AR) experiences often gravitate towards simplified development environments, such as 3D game engines. While popular game engines such as Unity and Unreal have evolved to offer extensive support and functionalities for AR creation, many developers still find it difficult to realize their immersive development projects. We ran an observational study with 12 software developers to assess how they approach the initial AR creation processes using a simplified development framework, the information resources they seek, and how their learning experience compares to the more mainstream 2D development. We observed that developers often started by looking for code examples rather than breaking down complex problems, leading to challenges in visualizing the AR experience. They encountered vocabulary issues and found trial-and-error methods ineffective due to a lack of familiarity with 3D environments, physics, and motion. These observations highlight the distinct needs of emerging AR developers and suggest that conventional code reuse strategies in mainstream development may be less effective in AR. We discuss the importance of developing more intuitive training and learning methods to foster diversity in developing interactive systems and support self-taught learners.

(This article belongs to the Special Issue 3D User Interfaces and Virtual Reality)

Open AccessArticle

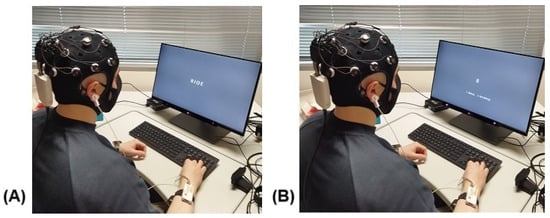

EEG, Pupil Dilations, and Other Physiological Measures of Working Memory Load in the Sternberg Task

by Mohammad Ahmadi, Samantha W. Michalka, Marzieh Ahmadi Najafabadi, Burkhard C. Wünsche and Mark Billinghurst

Multimodal Technol. Interact. 2024, 8(4), 34; https://doi.org/10.3390/mti8040034 - 19 Apr 2024

Abstract

Recent evidence shows that physiological cues, such as pupil dilation (PD), heart rate (HR), skin conductivity (SC), and electroencephalography (EEG), can indicate cognitive load (CL) in users while performing tasks. This paper aims to investigate physiological (multimodal) measurement of CL in a Sternberg memory task as the difficulty level increases in both maintenance and probe phases. For this purpose, we designed a Sternberg memory test with four levels of difficulty determined by the number of letters in the words that need to be remembered. Our behavioral performance results show that the CL of the task is related to the number of letters in non-semantic words, which confirms that this task serves as an appropriate metric of CL (the task difficulty increases as the number of letters in words increases). We were interested in investigating the suitability of multimodal physiological measures as correlates of four CL levels for both the maintenance and probe phases in the Sternberg memory task. Our motivation was to: (1) design and create four levels of task difficulty with a gradual increase in CL rather than just high and low CL, (2) use the Sternberg test as our test bed, (3) explore both the maintenance and probe phases for measurement of CL, and (4) explore the correlation of physiological cues (PD, HR, SC, EEG) with CL in both phases. Testing with the system, we found that for both the maintenance and probe phases, there was a significant positive linear relationship between average baseline corrected PD and CL. We also observed that the average baseline corrected SC showed significant increases as the number of letters in the words increased for both the maintenance and probe phases. However, the HR analysis did not show any correlation with an increase in CL in either of the maintenance or probe phases. 
An additional analysis was conducted to investigate the correlation of these physiological signals for high (seven-letter words) versus low (four-letter words) CL. Our EEG analysis for the maintenance phase found significant positive linear relationships between the power spectral density (PSD) and CL for the upper alpha bands in the centrotemporal, frontal, and occipitoparietal regions of the brain and significant positive linear relationships between the PSD and CL for the lower alpha band in the frontal and occipitoparietal regions. However, our EEG analysis of the probe phase did not show any linear relationship between the PSD and CL in any region. These results suggest that PD, SC, and EEG could be used as suitable metrics for the measurement of cognitive load in Sternberg memory tasks. We discuss this, limitations of the study, and directions for future work.

Open Access Article

The Effect of Culture and Social-Cognitive Characteristics on App Preference and Willingness to Use a Fitness App

by Kiemute Oyibo and Julita Vassileva

Multimodal Technol. Interact. 2024, 8(4), 33; https://doi.org/10.3390/mti8040033 - 17 Apr 2024

Abstract

►▼

Show Figures

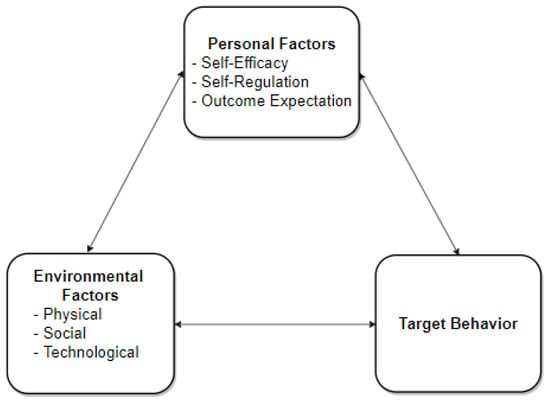

Fitness apps are persuasive tools developed to motivate physical activity. Despite their popularity, there is little work on how social-cognitive characteristics such as culture, household size, physical activity level, perceived self-efficacy and social support influence users’ willingness to use them and preference (personal

[...] Read more.

Fitness apps are persuasive tools developed to motivate physical activity. Despite their popularity, there is little work on how social-cognitive characteristics such as culture, household size, physical activity level, perceived self-efficacy and social support influence users’ willingness to use them and preference (personal vs. social). Knowing these relationships can help developers tailor fitness apps to different socio-cultural groups. Hence, we conducted two studies to address the research gap. In the first study (n = 194) aimed at recruiting participants for the second study, we asked participants about their app preference (personal vs. social), physical activity level and key demographic variables. In the second study (n = 49), we asked participants about their social-cognitive beliefs about exercise and their willingness to use a fitness app (presented as a screenshot). The results of the first study showed that, in the collectivist group (Nigerians), people in large households were more likely to be active and use the social version of a fitness app than those in small households. However, in the individualist group (Canadians/Americans), neither the preference for the social or personal version of a fitness app nor the physical activity level depended on the household size. Moreover, in the second study, in the individualist model, perceived self-efficacy and perceived self-regulation have a significant total effect on willingness to use a fitness app. However, in the collectivist model, perceived social support and outcome expectation have a significant total effect on the target construct. Finally, we found that females in individualist cultures had higher overall social-cognitive beliefs about exercise than males in individualist cultures and females in collectivist cultures. The implications of the findings are discussed.

Open Access Systematic Review

A Comparison of Parenting Strategies in a Digital Environment: A Systematic Literature Review

by Leonarda Banić and Tihomir Orehovački

Multimodal Technol. Interact. 2024, 8(4), 32; https://doi.org/10.3390/mti8040032 - 12 Apr 2024

Abstract

►▼

Show Figures

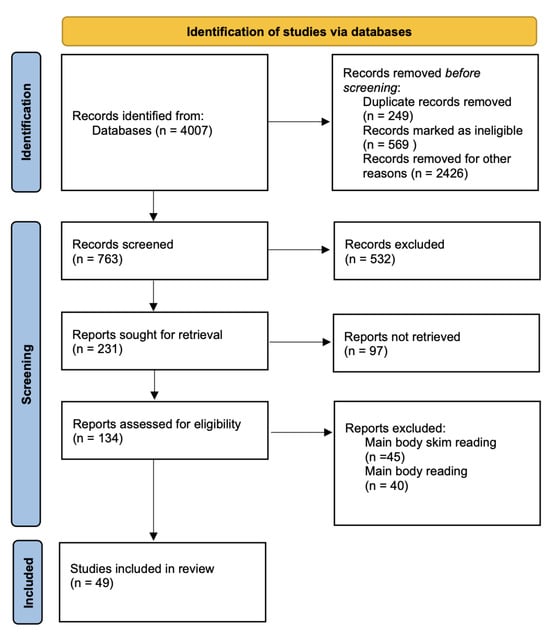

In the modern digital landscape, parental involvement in shaping children’s internet usage has gained unprecedented importance. This research delves into the evolving trends of parental mediation concerning children’s internet activities. As the digital realm increasingly influences young lives, the role of parents in

[...] Read more.

In the modern digital landscape, parental involvement in shaping children’s internet usage has gained unprecedented importance. This research delves into the evolving trends of parental mediation concerning children’s internet activities. As the digital realm increasingly influences young lives, the role of parents in guiding and safeguarding their children’s online experiences becomes crucial. The study addresses key research questions to explore the strategies parents adopt, the content they restrict, the rules they establish, the potential exposure to inappropriate content, and the impact of parents’ computer literacy on their children’s internet safety. Additionally, the research includes a thematic question that broadens the analysis by incorporating insights from studies not directly answering the primary questions but contributing valuable context and understanding to the digital parenting arena. Building on this, the findings from a systematic literature review, conducted in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines, highlight a shift towards more proactive parental involvement. Incorporating 49 studies from 11 databases, these findings reveal the current trends and methodologies in parental mediation. Active mediation strategies, which involve positive interactions and discussions about online content, are gaining recognition alongside the prevalent restrictive mediation approaches. Parents are proactively forbidding specific internet content, emphasizing safety and privacy concerns. Moreover, the emergence of parents’ computer literacy as a significant factor influencing their children’s online safety underlines the importance of digital proficiency. 
By shedding light on the contemporary landscape of parental mediation, this study contributes to a deeper understanding of how parents navigate their children’s internet experiences and the challenges they face in ensuring responsible and secure online engagement. The implications of these findings offer valuable insights for both practitioners and researchers, emphasizing the need for active parental involvement and the importance of enhancing parents’ digital proficiency. Despite limitations due to the language and methodological heterogeneity among the included studies, this research paves the way for future investigations into digital parenting practices.

Open Access Article

MirrorCampus: A Synchronous Hybrid Learning Environment That Supports Spatial Localization of Learners for Facilitating Discussion-Oriented Behaviors

by Shota Sawada, SunKyoung Kim, Masakazu Hirokawa and Kenji Suzuki

Multimodal Technol. Interact. 2024, 8(4), 31; https://doi.org/10.3390/mti8040031 - 11 Apr 2024

Abstract

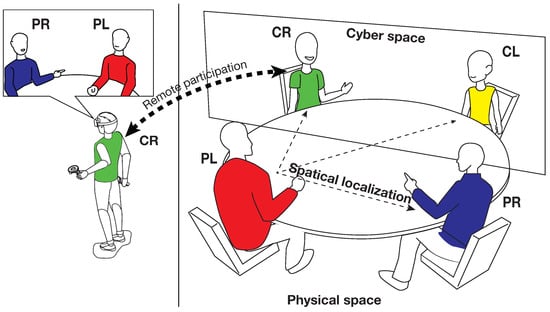

A growing number of higher-education institutions are implementing synchronous hybrid delivery, which provides both online and on-campus learners with simultaneous instruction, especially for facilitating discussions in Active Learning (AL) contexts. However, learners face difficulties in picking up social cues and gaining free access

[...] Read more.

A growing number of higher-education institutions are implementing synchronous hybrid delivery, which provides both online and on-campus learners with simultaneous instruction, especially for facilitating discussions in Active Learning (AL) contexts. However, learners face difficulties in picking up social cues and gaining free access to speaking rights due to the geometrical misalignment of individuals mediated through screens. We assume that discussions can be cultivated by ensuring a spatial localization of learners similar to that in a physical space. This study aims to design a synchronous hybrid learning environment, called Mirror Campus (MC), suitable for the AL scenario that connects physical and cyberspaces by providing spatial localization of learners. We hypothesize that the MC promotes discussion-oriented behaviors, and eventually enhances applied skills for group tasks, related to discussion, creativity, decision-making, and interdependence. We conducted an experiment with five different groups, where four participants in each group were asked to discuss a given topic for fifteen minutes, and found that the occurrences of facing behaviors, intervening, and simultaneous utterances in the MC increased significantly compared to conventional video conferencing. In conclusion, this study demonstrated the significance of the spatial localization of learners to facilitate discussion-oriented behaviors such as facing and speech.

(This article belongs to the Special Issue Designing EdTech and Virtual Learning Environments)

Open Access Article

iPlan: A Platform for Constructing Localized, Reduced-Form Models of Land-Use Impacts

by Andrew R. Ruis, Carol Barford, Jais Brohinsky, Yuanru Tan, Matthew Bougie, Zhiqiang Cai, Tyler J. Lark and David Williamson Shaffer

Multimodal Technol. Interact. 2024, 8(4), 30; https://doi.org/10.3390/mti8040030 - 10 Apr 2024

Abstract

To help young people understand socio-environmental systems and develop the confidence that meaningful action can be taken to address socio-environmental problems, young people need interactive simulations that enable them to take consequential actions in a familiar context and see the results. This can be achieved through reduced-form models with appropriate user interfaces, but it is a significant challenge to construct a system capable of producing educational models of socio-environmental systems that are localizable and customizable but accessible to educators and learners. In this paper, we present iPlan, a free, online educational software application designed to enable educators and middle- and high-school-aged learners to create custom, localized land-use simulations that can be used to frame, explore, and address complex land-use problems. We describe in detail the software application and its underlying computational models, and we present robust evidence that the accuracy of iPlan simulations is appropriate for educational contexts and preliminary evidence that educators are able to produce simulations suitable for their pedagogical goals and learner populations.

Full article

Figure 1

Open AccessArticle

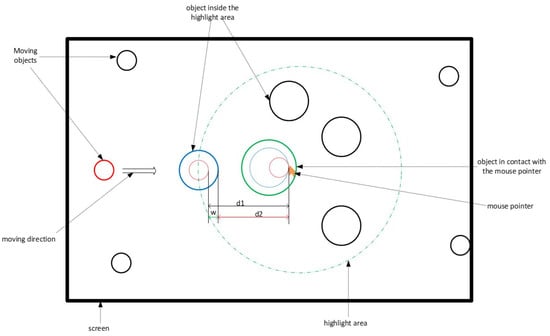

A Two-Level Highlighting Technique Based on Gaze Direction to Improve Target Pointing and Selection on a Big Touch Screen

by Valéry Marcial Monthe and Thierry Duval

Multimodal Technol. Interact. 2024, 8(4), 29; https://doi.org/10.3390/mti8040029 - 10 Apr 2024

Abstract

In this paper, we present an approach to improve pointing methods and target selection on tactile human–machine interfaces. This approach defines a two-level highlighting technique (TLH) based on the direction of gaze for target selection on a touch screen. The technique uses the orientation of the user’s head to approximate the direction of his gaze and uses this information to preselect the potential targets. An experimental system with a multimodal interface has been prototyped to assess the impact of TLH on target selection on a touch screen and compare its performance with that of traditional methods (mouse and touch). We conducted an experiment to assess the effectiveness of our proposition in terms of the rate of selection errors made and time for completion of the task. We also made a subjective estimation of ease of use, suitability for selection, confidence brought by the TLH, and contribution of TLH to improving the selection of targets. Statistical results show that the proposed TLH significantly reduces the selection error rate and the time to complete tasks.

Full article

Figure 1

Open AccessArticle

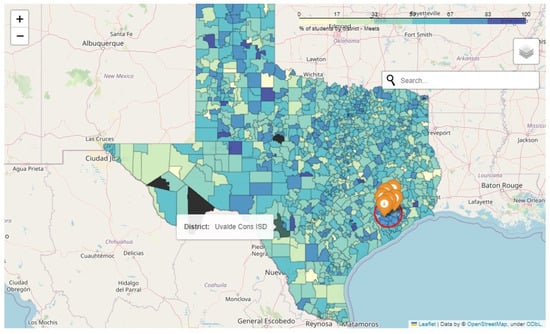

Leveraging Visualization and Machine Learning Techniques in Education: A Case Study of K-12 State Assessment Data

by Loni Taylor, Vibhuti Gupta and Kwanghee Jung

Multimodal Technol. Interact. 2024, 8(4), 28; https://doi.org/10.3390/mti8040028 - 08 Apr 2024

Abstract

As data-driven models gain importance in driving decisions and processes, it has become increasingly important to visualize data with both speed and accuracy. A massive volume of data is presently generated in the educational sphere from various learning platforms, tools, and institutions. The visual analytics of educational big data has the capability to improve student learning, develop strategies for personalized learning, and improve faculty productivity. However, there are limited advancements in the education domain for data-driven decision making leveraging the recent advancements in the field of machine learning. Some of the recent tools such as Tableau, Power BI, Microsoft Azure suite, Sisense, etc., leverage artificial intelligence and machine learning techniques to visualize data and generate insights from them; however, their applicability in educational advances is limited. This paper focuses on leveraging machine learning and visualization techniques to demonstrate their utility through a practical implementation using K-12 state assessment data compiled from the institutional websites of the States of Texas and Louisiana. Effective modeling and predictive analytics are the focus of the sample use case presented in this research. Our approach demonstrates the applicability of web technology in conjunction with machine learning to provide a cost-effective and timely solution to visualize and analyze big educational data. Additionally, ad hoc visualization provides contextual analysis in areas of concern for education agencies (EAs).

(This article belongs to the Special Issue Data Visualization)

►▼

Show Figures

Figure 1

Open AccessReview

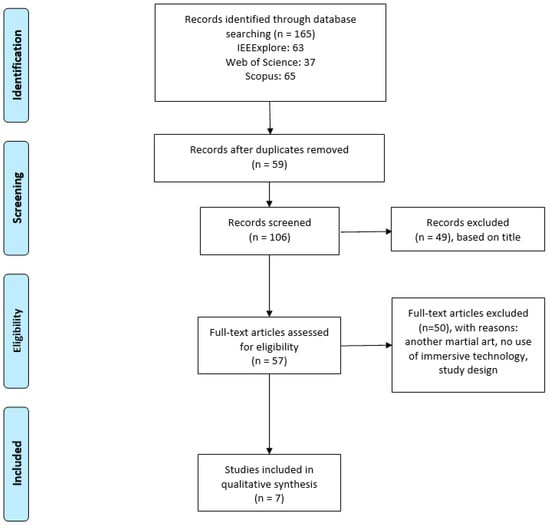

The Use of Immersive Technologies in Karate Training: A Scoping Review

by Dimosthenis Lygouras and Avgoustos Tsinakos

Multimodal Technol. Interact. 2024, 8(4), 27; https://doi.org/10.3390/mti8040027 - 01 Apr 2024

Abstract

This study investigates the integration of immersive technologies, primarily virtual reality (VR), in the domain of karate training and practice. The scoping review adheres to PRISMA guidelines and encompasses an extensive search across IEEE Xplore, Web of Science, and Scopus databases, yielding a total of 165 articles, from which 7 were ultimately included based on strict inclusion and exclusion criteria. The selected studies consistently highlight the dominance of VR technology in karate practice and teaching, with VR often facilitated by head-mounted displays (HMDs). The main purpose of VR is to create life-like training environments, evaluate performance, and enhance skill development. Immersive technologies, particularly VR, offer accurate motion capture and recording capabilities that deliver detailed feedback on technique, reaction time, and decision-making. This precision empowers athletes and coaches to identify areas for improvement and make data-driven training adjustments. Despite the promise of immersive technologies, established frameworks or guidelines are absent for their effective application in karate training. As a result, this suggests a need for best practices and guidelines to ensure optimal integration.

Full article

Figure 1

Open AccessArticle

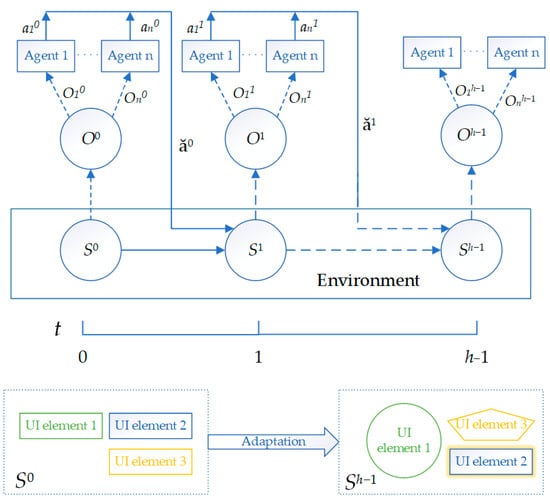

Mobile User Interface Adaptation Based on Usability Reward Model and Multi-Agent Reinforcement Learning

by Dmitry Vidmanov and Alexander Alfimtsev

Multimodal Technol. Interact. 2024, 8(4), 26; https://doi.org/10.3390/mti8040026 - 24 Mar 2024

Abstract

Today, reinforcement learning is one of the most effective machine learning approaches in the tasks of automatically adapting computer systems to user needs. However, implementing this technology into a digital product requires addressing a key challenge: determining the reward model in the digital environment. This paper proposes a usability reward model in multi-agent reinforcement learning. Well-known mathematical formulas used for measuring usability metrics were analyzed in detail and incorporated into the usability reward model. In the usability reward model, any neural network-based multi-agent reinforcement learning algorithm can be used as the underlying learning algorithm. This paper presents a study using independent and actor-critic reinforcement learning algorithms to investigate their impact on the usability metrics of a mobile user interface. Computational experiments and usability tests were conducted in a specially designed multi-agent environment for mobile user interfaces, enabling the implementation of various usage scenarios and real-time adaptations.

(This article belongs to the Special Issue Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives)

Open Access Article

Into the Rhythm: Evaluating Breathing Instruction Sound Experiences on the Run with Novice Female Runners

by Vincent van Rheden, Eric Harbour, Thomas Finkenzeller and Alexander Meschtscherjakov

Multimodal Technol. Interact. 2024, 8(4), 25; https://doi.org/10.3390/mti8040025 - 22 Mar 2024

Abstract

Running is a popular sport throughout the world. Breathing strategies like stable breathing and slow breathing can positively influence the runner’s physiological and psychological experiences. Sonic breathing instructions are an established, unobtrusive method used in contexts such as exercise and meditation. We argue sound to be a viable approach for administering breathing strategies whilst running. This paper describes two laboratory studies using within-subject designs that investigated the usage of sonic breathing instructions with novice female runners. The first study (N = 11) examined the effect of information richness of five different breathing instruction sounds on adherence and user experience. The second study (N = 11) explored adherence and user experience of sonically more enriched sounds, and aimed to increase the sonic experience. Results showed that all sounds were effective in stabilizing the breathing rate (study 1 and 2, respectively: mean absolute percentage error = 1.16 ± 1.05% and 1.9 ± 0.11%, percent time attached = 86.81 ± 9.71% and 86.18 ± 11.96%). Information-rich sounds were subjectively more effective compared to information-poor sounds (mean ratings: 7.55 ± 1.86 and 5.36 ± 2.42, respectively). All sounds scored low (mean < 5/10) on intention to use.

(This article belongs to the Special Issue Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives)

►▼

Show Figures

Figure 1

Open AccessArticle

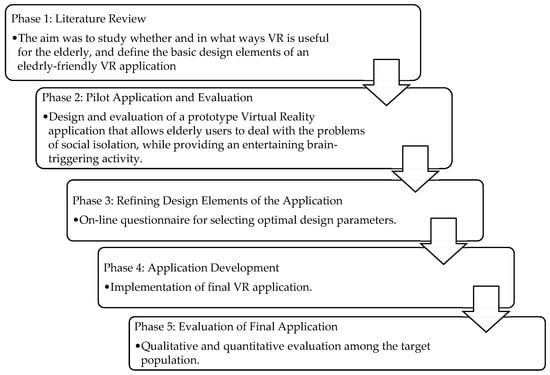

Design and Evaluation of a Memory-Recalling Virtual Reality Application for Elderly Users

by Zoe Anastasiadou, Eleni Dimitriadou and Andreas Lanitis

Multimodal Technol. Interact. 2024, 8(3), 24; https://doi.org/10.3390/mti8030024 - 21 Mar 2024

Abstract

Virtual reality (VR) can be useful in efforts that aim to improve the well-being of older members of society. Within this context, the work presented in this paper aims to provide the elderly with a user-friendly and enjoyable virtual reality application incorporating memory recall and storytelling activities that could promote mental awareness. An important aspect of the proposed VR application is the presence of a virtual audience that listens to the stories presented by elderly users and interacts with them. In an effort to maximize the impact of the VR application, research was conducted to study whether the elderly are willing to use the VR application and whether they believe it can help to improve well-being and reduce the effects of loneliness and social isolation. Self-reported results related to the experience of the users show that elderly users are positive towards the use of such an application in everyday life as a means of improving their overall well-being.

(This article belongs to the Special Issue 3D User Interfaces and Virtual Reality)

►▼

Show Figures

Figure 1

Open AccessArticle

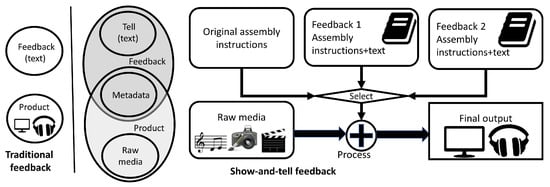

Show-and-Tell: An Interface for Delivering Rich Feedback upon Creative Media Artefacts

by Colin Dodds and Ahmed Kharrufa

Multimodal Technol. Interact. 2024, 8(3), 23; https://doi.org/10.3390/mti8030023 - 14 Mar 2024

Abstract

In this paper, we explore an approach to feedback which could allow those learning creative digital media practices in remote and asynchronous environments to receive rich, multi-modal, and interactive feedback upon their creative artefacts. We propose the show-and-tell feedback interface which couples graphical user interface changes (the show) to text-based explanations (the tell). We describe the rationale behind the design and offer a tentative set of design criteria. We report the implementation and deployment into a real-world educational setting using a prototype interface developed to allow either traditional text-only feedback or our proposed show-and-tell feedback across four sessions. The prototype was used to provide formative feedback upon music students’ coursework resulting in a total of 103 pieces of feedback. Thematic analysis was used to analyse the data obtained through interviews and focus groups with both educators and students (i.e., feedback givers and receivers). Recipients considered show-and-tell feedback to possess greater clarity and detail in comparison with the single modality text-only feedback they are used to receiving. We also report interesting emergent issues around control and artistic vision, and we discuss how these issues could be mitigated in future iterations of the interface.

Full article

Figure 1

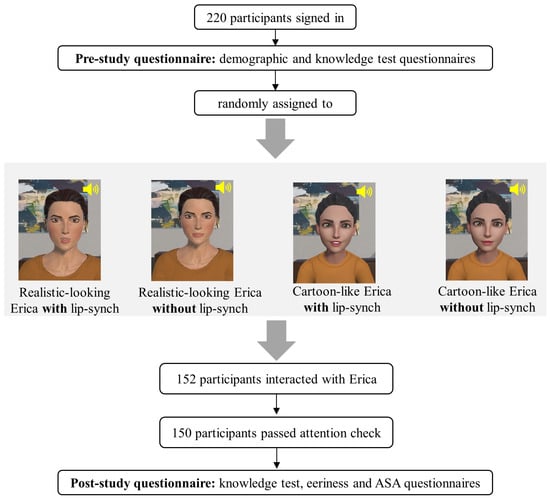

Open AccessArticle

Do Not Freak Me Out! The Impact of Lip Movement and Appearance on Knowledge Gain and Confidence

by Amal Abdulrahman, Katherine Hopman and Deborah Richards

Multimodal Technol. Interact. 2024, 8(3), 22; https://doi.org/10.3390/mti8030022 - 05 Mar 2024

Abstract

Virtual agents (VAs) have been used effectively for psychoeducation. However, getting the VA’s design right is critical to ensure the user experience does not become a barrier to receiving and responding to the intended message. The study reported in this paper seeks to help first-year psychology students to develop knowledge and confidence to recommend emotion regulation strategies. In previous work, we received negative feedback concerning the VA’s lip-syncing, including creepiness and visual overload, in the case of stroke patients. We seek to test the impact of the removal of lip-syncing on the perception of the VA and its ability to achieve its intended outcomes, also considering the influence of the visual features of the avatar. We conducted a 2 (lip-sync/no lip-sync) × 2 (human-like/cartoon-like) experimental design and measured participants’ perception of the VA in terms of eeriness, user experience, knowledge gain and participants’ confidence to practice their knowledge. While participants showed a tendency to prefer the cartoon look over the human look and the absence of lip-syncing over its presence, all groups reported no significant increase in knowledge but significant increases in confidence in their knowledge and ability to recommend the learnt strategies to others, concluding that realism and lip-syncing did not influence the intended outcomes. Thus, in future designs, we will allow the user to switch off the lip-sync function if they prefer. Further, our findings suggest that lip-syncing should not be a standard animation included with VAs, as is currently the case.

Open Access Article

Accessible Metaverse: A Theoretical Framework for Accessibility and Inclusion in the Metaverse

by

Achraf Othman, Khansa Chemnad, Aboul Ella Hassanien, Ahmed Tlili, Christina Yan Zhang, Dena Al-Thani, Fahriye Altınay, Hajer Chalghoumi, Hend S. Al-Khalifa, Maisa Obeid, Mohamed Jemni, Tawfik Al-Hadhrami and Zehra Altınay

Multimodal Technol. Interact. 2024, 8(3), 21; https://doi.org/10.3390/mti8030021 - 01 Mar 2024

Cited by 2

Abstract

The following article investigates the Metaverse and its potential to bolster digital accessibility for persons with disabilities. Through qualitative analysis, we examine responses from eleven experts in digital accessibility, Metaverse development, disability advocacy, and policy formulation. This exploration uncovers key insights into the

[...] Read more.

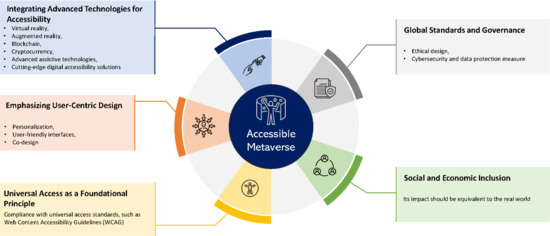

The following article investigates the Metaverse and its potential to bolster digital accessibility for persons with disabilities. Through qualitative analysis, we examine responses from eleven experts in digital accessibility, Metaverse development, disability advocacy, and policy formulation. This exploration uncovers key insights into the Metaverse’s current state, its inherent principles, and the challenges and opportunities it presents in terms of accessibility. The findings reveal a mixed state of inclusivity within the Metaverse, highlighting significant advancements along with notable gaps, especially in integrating assistive technologies and ensuring interoperability across different virtual environments. This study emphasizes the Metaverse’s potential to revolutionize experiences for individuals with disabilities, provided that accessibility is embedded in its foundational design. Ethical and legal considerations, such as privacy, non-discrimination, and evolving legal frameworks, are identified as critical factors that shape an inclusive Metaverse. We propose a comprehensive framework that emphasizes technological adaptation and innovation, user-centric design, universal access, social and economic considerations, and global standards. This framework aims to guide future research and policy interventions to foster an inclusive digital environment in the Metaverse. This paper contributes to the emerging discourse on the Metaverse and digital accessibility, offering a nuanced understanding of its complexities and a roadmap for future exploration and development. This underscores the necessity of a multi-faceted approach that incorporates technological innovation, user-centered design, ethical considerations, legal compliance, and continuous research to create an inclusive and accessible Metaverse.

(This article belongs to the Special Issue Designing an Inclusive and Accessible Metaverse)

Open Access Article

Trust Development and Explainability: A Longitudinal Study with a Personalized Assistive System

by

Setareh Zafari, Jesse de Pagter, Guglielmo Papagni, Alischa Rosenstein, Michael Filzmoser and Sabine T. Koeszegi

Multimodal Technol. Interact. 2024, 8(3), 20; https://doi.org/10.3390/mti8030020 - 01 Mar 2024

Abstract

This article reports on a longitudinal experiment in which the influence of an assistive system’s malfunctioning and transparency on trust was examined over a period of seven days. To this end, we simulated the system’s personalized recommendation features to support participants with the task of learning new texts and taking quizzes. Using a 2 × 2 mixed design, the system’s malfunctioning (correct vs. faulty) and transparency (with vs. without explanation) were manipulated as between-subjects variables, whereas exposure time was used as a repeated-measure variable. A combined qualitative and quantitative methodological approach was used to analyze the data from 171 participants. Our results show that participants perceived the system making a faulty recommendation as a trust violation. Additionally, a trend emerged from both the quantitative and qualitative analyses regarding how the availability of explanations (even when not accessed) increased the perception of a trustworthy system.

(This article belongs to the Special Issue Cooperative Intelligence in Automated Driving- 2nd Edition)

Open Access Article

Enhancing Calculus Learning through Interactive VR and AR Technologies: A Study on Immersive Educational Tools

by

Logan Pinter and Mohammad Faridul Haque Siddiqui

Multimodal Technol. Interact. 2024, 8(3), 19; https://doi.org/10.3390/mti8030019 - 01 Mar 2024

Abstract

In the realm of collegiate education, calculus can be quite challenging for students. Many students struggle to visualize abstract concepts, as mathematics often moves into strict arithmetic rather than geometric understanding. Our study presents an innovative solution to this problem: an immersive, interactive VR graphing tool capable of standard 2D graphs, solids of revolution, and a series of visualizations deemed potentially useful to struggling students. This tool was developed within the Unity 3D engine, and while interaction and expression parsing rely on existing libraries, core functionalities were developed independently. As a pilot study, it includes qualitative information from a survey of students currently or previously enrolled in Calculus II/III courses, revealing its potential effectiveness. This survey primarily aims to determine the tool’s viability in future endeavors. The positive response suggests the tool’s immediate usefulness and its promising future in educational settings, prompting further exploration and consideration for adaptation into an Augmented Reality (AR) environment.

(This article belongs to the Special Issue 3D User Interfaces and Virtual Reality)

Open Access Perspective

Keep the Human in the Loop: Arguments for Human Assistance in the Synthesis of Simulation Data for Robot Training

by

Carina Liebers, Pranav Megarajan, Jonas Auda, Tim C. Stratmann, Max Pfingsthorn, Uwe Gruenefeld and Stefan Schneegass

Multimodal Technol. Interact. 2024, 8(3), 18; https://doi.org/10.3390/mti8030018 - 01 Mar 2024

Abstract

Robot training often takes place in simulated environments, particularly with reinforcement learning. Therefore, multiple training environments are generated using domain randomization to ensure transferability to real-world applications and compensate for unknown real-world states. We propose improving domain randomization by involving human application experts in various stages of the training process. Experts can provide valuable judgments on simulation realism, identify missing properties, and verify robot execution. Our human-in-the-loop workflow describes how they can enhance the process in five stages: validating and improving real-world scans, correcting virtual representations, specifying application-specific object properties, verifying and influencing simulation environment generation, and verifying robot training. We outline examples and highlight research opportunities. Furthermore, we present a case study in which we implemented different prototypes, demonstrating the potential of human experts in the given stages. Our early insights indicate that human input can benefit robot training at different stages.

(This article belongs to the Special Issue Challenges in Human-Centered Robotics)

Open Access Feature Paper Article

The FlexiBoard: Tangible and Tactile Graphics for People with Vision Impairments

by

Mathieu Raynal, Julie Ducasse, Marc J.-M. Macé, Bernard Oriola and Christophe Jouffrais

Multimodal Technol. Interact. 2024, 8(3), 17; https://doi.org/10.3390/mti8030017 - 27 Feb 2024

Abstract

Over the last decade, several projects have demonstrated how interactive tactile graphics and tangible interfaces can improve and enrich access to information for people with vision impairments. While the former can be used to display a relatively large amount of information, they cannot be physically updated, which constrains the type of tasks that they can support. On the other hand, tangible interfaces are particularly suited for the (re)construction and manipulation of graphics, but the use of physical objects also restricts the type and amount of information that they can convey. We propose to bridge the gap between these two approaches by investigating the potential of tactile and tangible graphics for people with vision impairments. Working closely with special education teachers, we designed and developed the FlexiBoard, an affordable and portable system that enhances traditional tactile graphics with tangible interaction. In this paper, we report on the successive design steps that enabled us to identify and consider technical and design requirements. We thereafter explore two domains of application for the FlexiBoard: education and board games. Firstly, we report on one brainstorming session that we organized with four teachers in order to explore the application space of tangible and tactile graphics for educational activities. Secondly, we describe how the FlexiBoard enabled the successful adaptation of one visual board game into a multimodal accessible game that supports collaboration between sighted, low-vision and blind players.

Open Access Article

How to Design Human-Vehicle Cooperation for Automated Driving: A Review of Use Cases, Concepts, and Interfaces

by

Jakob Peintner, Bengt Escher, Henrik Detjen, Carina Manger and Andreas Riener

Multimodal Technol. Interact. 2024, 8(3), 16; https://doi.org/10.3390/mti8030016 - 26 Feb 2024

Abstract

Currently, a significant gap exists between academic and industrial research in automated driving development. Despite this, there is common sense that cooperative control approaches in automated vehicles will surpass the previously favored takeover paradigm in most driving situations due to enhanced driving performance

[...] Read more.

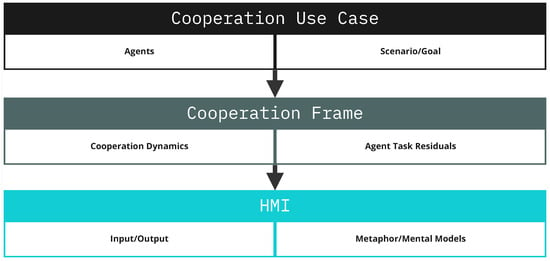

Currently, a significant gap exists between academic and industrial research in automated driving development. Despite this, there is a consensus that cooperative control approaches in automated vehicles will surpass the previously favored takeover paradigm in most driving situations due to enhanced driving performance and user experience. Yet, the application of these concepts in real driving situations remains unclear, and a holistic approach to driving cooperation is missing. Existing research has primarily focused on testing specific interaction scenarios and implementations. To address this gap and offer a contemporary perspective on designing human–vehicle cooperation in automated driving, we have developed a three-part taxonomy with the help of an extensive literature review. The taxonomy broadens the notion of driving cooperation towards a holistic and application-oriented view by encompassing (1) the “Cooperation Use Case”, (2) the “Cooperation Frame”, and (3) the “Human–Machine Interface”. We validate the taxonomy by categorizing related literature and providing a detailed analysis of an exemplar paper. The proposed taxonomy offers designers and researchers a concise overview of the current state of driver cooperation and insights for future work. Further, the taxonomy can guide automotive HMI designers in ideation, communication, comparison, and reflection of cooperative driving interfaces.

(This article belongs to the Special Issue Cooperative Intelligence in Automated Driving- 2nd Edition)

Topics

Topic in

Information, Mathematics, MTI, Symmetry

Youth Engagement in Social Media in the Post COVID-19 Era

Topic Editors: Naseer Abbas Khan, Shahid Kalim Khan, Abdul Qayyum

Deadline: 30 September 2024

Special Issues

Special Issue in

MTI

Designing an Inclusive and Accessible Metaverse

Guest Editors: Joel Fredericks, Youngho Lee, Youngjun Cho, Mark Billinghurst, Callum Parker, Soojeong Yoo

Deadline: 20 June 2024

Special Issue in

MTI

Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives

Guest Editors: Takumi Ohashi, Di Zhu, Kuo-Hsiang Chen, Wei Liu, Jan Auernhammer

Deadline: 30 June 2024

Special Issue in

MTI

Innovative Theories and Practices for Designing and Evaluating Inclusive Educational Technology and Online Learning

Guest Editor: Julius Nganji

Deadline: 12 July 2024

Special Issue in

MTI

Multimodal Interaction in Education

Guest Editor: Wajeeh Daher

Deadline: 20 August 2024